The conversation about data quality for AI in pharma has shifted. It is no longer an upstream IT concern or a compliance checkbox. It has become the single largest determinant of whether artificial intelligence delivers value in regulated environments — or introduces risks that regulators, auditors, and quality teams are increasingly unwilling to absorb.

In practice, pharma AI readiness now depends less on model sophistication than on whether underlying regulated data is fit for purpose, traceable, and governed for reuse.

Why AI struggles in regulated pharma environments

Regulatory and product information is reused across submissions, markets, and lifecycle stages, often with subtle contextual differences. When that information is scattered across documents and systems, AI systems cannot reliably determine what is authoritative.

This creates practical problems. AI-generated outputs may be difficult to explain. Conflicting inputs lead to conflicting results. Provenance becomes unclear, making validation and approval more complex rather than less. In regulated contexts, any tool that increases ambiguity increases risk.

The issue is not that AI is unsuitable for pharma. It is that AI requires a level of data discipline many organizations have not yet achieved, particularly around traceability, consistency, and lifecycle control, which regulators increasingly expect to be demonstrable rather than reconstructed retrospectively. FDA’s January 2025 draft guidance on AI for drug and biological regulatory decision-making reinforces this by recommending that sponsors define a model’s context of use and apply a risk-based credibility assessment framework.

Data quality is not about accuracy alone

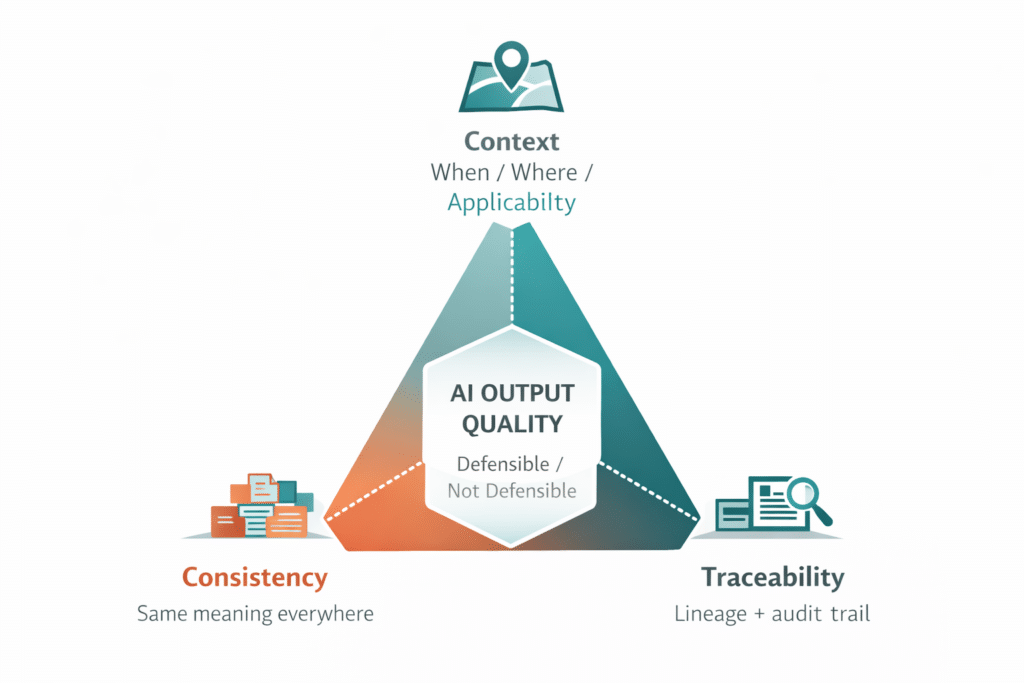

Data quality is often reduced to correctness. Is the information accurate? Has it been approved? In reality, quality in regulated environments is multidimensional. High-quality data must also be consistent, traceable, and contextual.

It must also be structured and described well enough for systems to determine intended use, approval state, lineage, and reuse constraints at the data-element level.
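As a rough illustration of what "described at the data-element level" could mean, the sketch below models a single content element with its governing metadata. The class name, field names, and approval states are hypothetical choices made for this example; they are not taken from any specific product or standard.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class ApprovalState(Enum):
    # Illustrative states only; real workflows will define their own.
    DRAFT = "draft"
    IN_REVIEW = "in_review"
    APPROVED = "approved"
    SUPERSEDED = "superseded"


@dataclass(frozen=True)
class DataElement:
    """One reusable unit of regulated content plus its governing metadata."""

    element_id: str
    text: str
    intended_use: str                    # e.g. "indication", "dosing", "warning"
    approval_state: ApprovalState
    derived_from: str | None = None      # lineage: id of the parent element, if any
    allowed_markets: frozenset[str] = field(default_factory=frozenset)

    def usable_in(self, market: str) -> bool:
        """True only if the element is approved and cleared for this market."""
        return (
            self.approval_state is ApprovalState.APPROVED
            and market in self.allowed_markets
        )
```

With metadata held this way, a system can ask `element.usable_in("EU")` rather than inferring approval state or market scope from the surrounding document.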

Consistency: the same meaning, everywhere it appears

Consistency ensures the same data conveys the same meaning wherever it is used. In pharma, the same indication, dosing instruction, or safety statement may appear across a CCDS, regional labels, IFUs, ePI artifacts, and submission modules. If those instances diverge — even by a word — AI cannot determine which is authoritative, and downstream outputs inherit the conflict. Consistency is what allows reuse to operate as a control rather than a risk.
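The divergence described above can be checked mechanically once each statement carries a stable identifier. The following is a minimal sketch under that assumption; the function name, the tuple layout, and the whitespace-only normalization are illustrative choices, not a prescribed method.

```python
from collections import defaultdict


def find_divergent_statements(instances):
    """Return {statement_id: {wording: [artifacts]}} for ids with more than one wording.

    `instances` is an iterable of (artifact, statement_id, text) tuples.
    """
    by_id = defaultdict(lambda: defaultdict(list))
    for artifact, statement_id, text in instances:
        normalized = " ".join(text.split())  # ignore whitespace-only differences
        by_id[statement_id][normalized].append(artifact)
    # Keep only the statements whose wording differs somewhere.
    return {
        sid: dict(wordings)
        for sid, wordings in by_id.items()
        if len(wordings) > 1
    }


instances = [
    ("CCDS", "dose-1", "Do not exceed 40 mg daily."),
    ("EU label", "dose-1", "Do not exceed  40 mg daily."),
    ("US label", "dose-1", "Do not exceed 40 mg per day."),
    ("CCDS", "ind-1", "Treatment of condition X."),
    ("EU label", "ind-1", "Treatment of condition X."),
]
divergent = find_divergent_statements(instances)
```

Here only `dose-1` is flagged, because the US label wording differs from the other two. Whether such a difference is an error or an intended regional variant is a governance decision the check itself cannot make.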

Traceability: lineage that can be defended under inspection

Traceability allows organizations to explain where information came from and how it changed over time. Every data element should carry a record of its origin, approval status, and revision history: the evidence a regulator expects to see produced, not reconstructed, during inspection. For AI, traceability converts an opaque output into a defensible one, because the source and lineage of every input can be produced on demand. Without it, AI outputs cannot be validated, and unvalidated outputs cannot be approved.
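Producing lineage "on demand" amounts to walking recorded parent links back to an origin. The sketch below assumes a hypothetical record layout (`parent`, `status`, `revision`) invented for illustration; it is not a description of any particular system's audit trail.

```python
def trace_lineage(element_id, records):
    """Walk parent links from an element back to its origin.

    `records` maps element_id -> {"parent": id or None, "status": str, "revision": int}.
    Raises KeyError if a link is missing and ValueError if the chain loops.
    """
    chain, seen = [], set()
    current = element_id
    while current is not None:
        if current in seen:
            raise ValueError(f"lineage loop at {current!r}")
        seen.add(current)
        record = records[current]  # a KeyError here means provenance cannot be shown
        chain.append((current, record["status"], record["revision"]))
        current = record["parent"]
    return chain


records = {
    "ccds-warn-7": {"parent": None, "status": "approved", "revision": 3},
    "eu-warn-7": {"parent": "ccds-warn-7", "status": "approved", "revision": 1},
}
lineage = trace_lineage("eu-warn-7", records)
```

The design choice worth noting is that a missing link raises an error instead of returning a partial chain: an incomplete lineage is treated as a failure, not as a shorter answer.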

Context: when, where, and how data should be applied

Context defines when, where, and how data should be applied. The same molecule entry may be appropriate for one market and inappropriate for another; the same warning may apply to one indication and not to a related one. AI cannot infer these boundaries from the data alone; they must be encoded as metadata, governance rules, and reuse constraints. Context is what prevents AI from producing outputs that are technically accurate but operationally wrong.

AI depends on all three dimensions: consistency, traceability, and context. Without them, outputs may be technically correct but operationally unusable or regulatorily indefensible.

These three dimensions are not abstractions. They map directly to the ALCOA+ principles — attributable, legible, contemporaneous, original, accurate, complete, consistent, enduring, and available — used by MHRA in its GxP data integrity guidance as a practical benchmark for whether pharmaceutical data is governed well enough to support regulated decisions. For AI, the implication is straightforward: data that cannot be attributed, traced, and governed consistently is difficult to defend once it begins informing automated outputs.

Poor data quality is rarely visible in static workflows. It becomes obvious only when systems attempt to automate interpretation and reuse at scale.

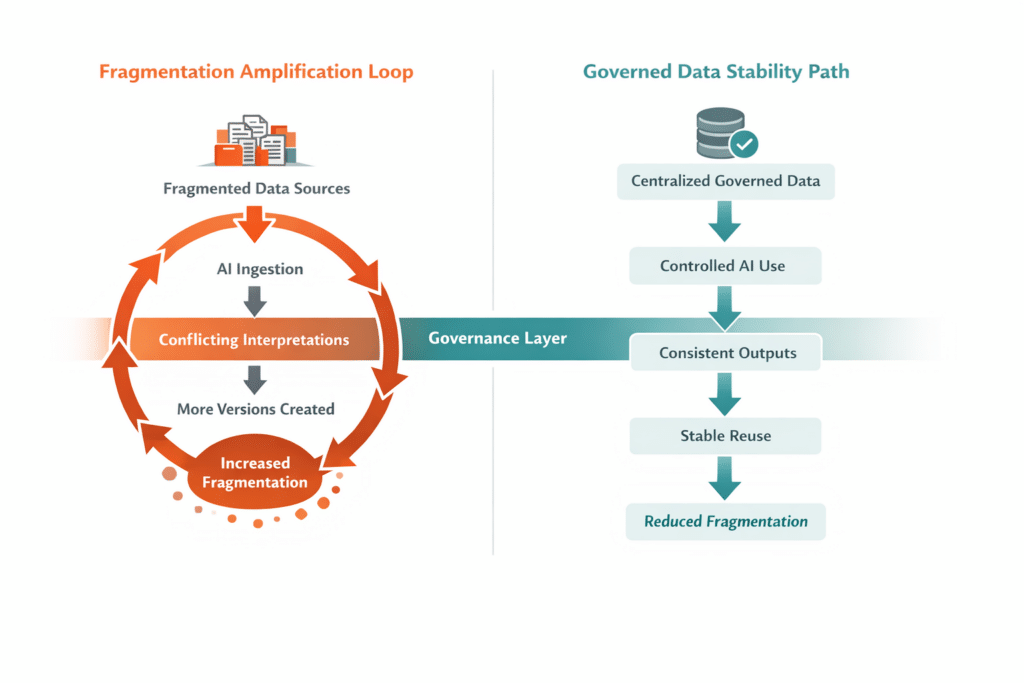

Why AI amplifies data fragmentation instead of fixing it

There is a common misconception that AI can resolve fragmentation by “figuring it out.” In practice, AI does the opposite. When multiple versions of similar data exist, AI has no inherent way to select the correct one unless governance rules already exist. It may blend inputs, prioritize recency over approval status, or generate outputs that appear plausible but lack regulatory defensibility.
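To make the contrast concrete, the sketch below shows one way an explicit governance rule could replace guesswork: approval status and market scope filter the candidates first, and recency only breaks ties among approved versions. The function name and dictionary keys are assumptions made for this example.

```python
from datetime import date


def select_authoritative(versions, market):
    """Pick the most recently approved version valid for `market`, else None.

    `versions` is a list of dicts with keys "id", "status", "markets", "approved_on".
    """
    candidates = [
        v for v in versions
        if v["status"] == "approved" and market in v["markets"]
    ]
    if not candidates:
        return None  # refuse to guess; the caller should escalate to human review
    return max(candidates, key=lambda v: v["approved_on"])


versions = [
    {"id": "v1", "status": "approved", "markets": {"EU", "US"}, "approved_on": date(2023, 5, 1)},
    {"id": "v2", "status": "approved", "markets": {"EU"}, "approved_on": date(2024, 2, 1)},
    {"id": "v3", "status": "draft", "markets": {"EU", "US"}, "approved_on": None},
]
eu_choice = select_authoritative(versions, "EU")
us_choice = select_authoritative(versions, "US")
```

In this example the newest item, the draft `v3`, is never selected, and a market with no approved version yields `None` instead of a blended or best-effort answer.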

This is especially dangerous in lifecycle scenarios where small wording differences carry significant implications. AI does not understand regulatory nuance unless that nuance is embedded in the data itself. Without a controlled, authoritative foundation, AI accelerates divergence rather than convergence.

AI does not fix fragmentation. It makes fragmentation visible—and operationally costly.

This is precisely why both the FDA and EMA now treat data quality as inseparable from AI credibility. The FDA’s January 2025 draft guidance on AI in regulatory decision-making for drugs and biological products introduces a risk-based credibility assessment framework tied to a defined context of use. EMA’s reflection paper on AI in the medicinal product lifecycle likewise emphasizes that AI in medicines is inherently data-driven, that risks to reliability and bias must be actively mitigated, and that AI use must remain coherent with existing legal and regulatory requirements.

Centralized data as the prerequisite for safe AI use

Safe and effective AI in pharma begins with centralized data management. A single authoritative foundation ensures that AI systems draw from validated, governed information.

It also gives that information the metadata needed to make approval state, jurisdiction, intended use, and reuse constraints machine-readable rather than implicit.

This is the operational expression of the FAIR principles — findable, accessible, interoperable, and reusable — applied to centralized data management in life sciences: data and metadata that AI can locate, interpret, and act on without inheriting the ambiguity that fragmented systems impose.

Centralization does not limit AI capability. It enables it. When data is authoritative, traceable, and contextual, AI can assist with drafting, analysis, and impact assessment without introducing unacceptable risk. Automation becomes an extension of existing governance rather than a threat to it.

Without centralized, governed data, AI adoption stalls at pilots because trust cannot scale.

How Docuvera provides the data foundation AI depends on

Docuvera is designed to support pharma AI readiness by championing centralized data management for life sciences — a single, governed source of truth for regulated content in environments where traceable data is a regulatory expectation, not a feature. Rather than layering AI on top of fragmented artifacts, Docuvera ensures that regulated data is managed as a coherent, authoritative foundation before automation is introduced.

Approval status, lineage, and reuse context — built in

Within Docuvera, approval status, lineage, and reuse context travel with the data itself. That foundation is what makes AI data governance in pharma operational rather than aspirational.

This allows AI-assisted processes to operate within clear boundaries. Outputs can be traced back to their source. Human oversight remains intact. AI accelerates work without undermining compliance.

Docuvera does not position AI as a shortcut. It positions AI as a multiplier for disciplined data practices.

What changes when AI operates on trusted data

When AI draws from high-quality, centralized data, its value becomes tangible. Drafting accelerates without introducing inconsistency. Impact analysis becomes more reliable because dependencies and reuse relationships are visible. Localization and adaptation become safer because the global core remains stable.

Trust as a measurable outcome, not a stated value

Equally important, confidence increases. Regulatory and operations teams trust AI-assisted outputs because they understand their provenance. Review focuses on judgment rather than verification. AI becomes a tool for scale, not a source of uncertainty.

These benefits are structural, not experimental. They persist because the underlying data behaves predictably across contexts and over time.

Why AI readiness is an operating model decision

Pharma AI readiness is often framed as a technology capability. In reality, it is an operating model decision. Organizations must decide whether they want AI to operate on fragmented artifacts or on governed data assets.

Those who choose the former will struggle to move beyond experimentation. Those who choose the latter will find AI increasingly valuable as data volume and complexity grow. The difference lies not in model sophistication, but in data discipline and governance by design.

The regulatory environment is moving in the same direction. The EU AI Act (Regulation (EU) 2024/1689) establishes a risk-based framework for AI, with high-risk obligations applying to certain systems and to some AI-enabled products already regulated under sectoral regimes such as medical devices. In parallel, the European Commission’s consultation on a new GMP Annex 22 on artificial intelligence in pharmaceutical manufacturing explicitly addresses intended use, training data quality, validation, performance monitoring, and human review. Pharma AI readiness is no longer just a forward-looking ambition; it is increasingly becoming a documented expectation.

AI exposes what organizations already know about their data

AI does not create new problems. It reveals existing ones faster. Organizations that experience difficulty adopting AI are often encountering long-standing data issues that manual processes previously masked. In this sense, AI is diagnostic as much as it is transformative.

The same documentation, electronic-record, audit-trail, and data-integrity expectations that have long shaped regulated operations now carry greater consequence when data is reused to train, inform, or constrain AI systems. The burden is no longer just to store records compliantly, but to ensure governed data can withstand automated reuse.

This is why investing in centralized, governed data management is a prerequisite for AI success. It addresses foundational issues that no algorithm can solve on its own and aligns with quality system expectations for controlled, scalable operations.

AI succeeds when data is allowed to behave well

Pharma organizations that succeed with AI will not be those with the most advanced models, but those with the most disciplined data foundations. High-quality, centralized, traceable data allows AI to accelerate work without increasing risk.

That is the core of data integrity for AI in pharma: not cleaner records alone, but governed data that remains reliable across reuse, review, and regulatory scrutiny.

Whether framed as ALCOA+ for AI in pharma, FAIR-aligned regulatory data quality, or pharma data governance by design, the underlying requirement is the same: data that is fit to be trusted, fit to be reused, and fit to be defended.

Docuvera provides the infrastructure needed to reach that state. By ensuring that regulated data is authoritative and reusable, it creates the conditions under which AI can be applied responsibly and at scale. AI will not fix bad data. But good data will allow AI to deliver on its promise.

See how Docuvera operationalizes governed, AI-ready data for pharma teams. Explore the platform or talk to our team about your structured content strategy for regulated, reusable content.

Why trust this perspective

This article is grounded in current FDA, EMA, MHRA, and European Commission guidance relevant to AI, regulated data, and data integrity in life sciences. It also reflects Docuvera’s focus on structured, governed content for regulated documentation workflows.